In recent years, both biometric authentication and behavioral authentication have increased in popularity as advances in technology have made them accessible for commodity deployment.

Companies often prefer them when SMS codes, OTP app codes, or authentication hardware like USB or Bluetooth tokens fall short of needed security thresholds, given that:

Biometric authentication and behavioral authentication each address these problems, yet they also come with their own issues—most notably, concerns about user privacy.

Biometric Authentication

Biometric authentication identifies users by measuring physical characteristics of their bodies, such as their fingerprints and faces.

Unfortunately, these technologies suffer from a key flaw. You can't change the physical properties that they use to identify you—yet they're used across society as definitive markers of your identity.

Worse, they can—in fact—be stolen. For example, you leave fingerprints and images of your face behind you nearly everywhere you go, and the increasing use of biometric authentication provides an incentive for just this kind of theft.

Meanwhile, their growing use in everyday IT authentication means that more and more fingerprints and faces are ending up in identity databases. If yours are being used for authentication and one of these databases is breached, as has happened on recent occasions, your credential is exposed and, unlike a password, can't be changed.

Behavioral Authentication

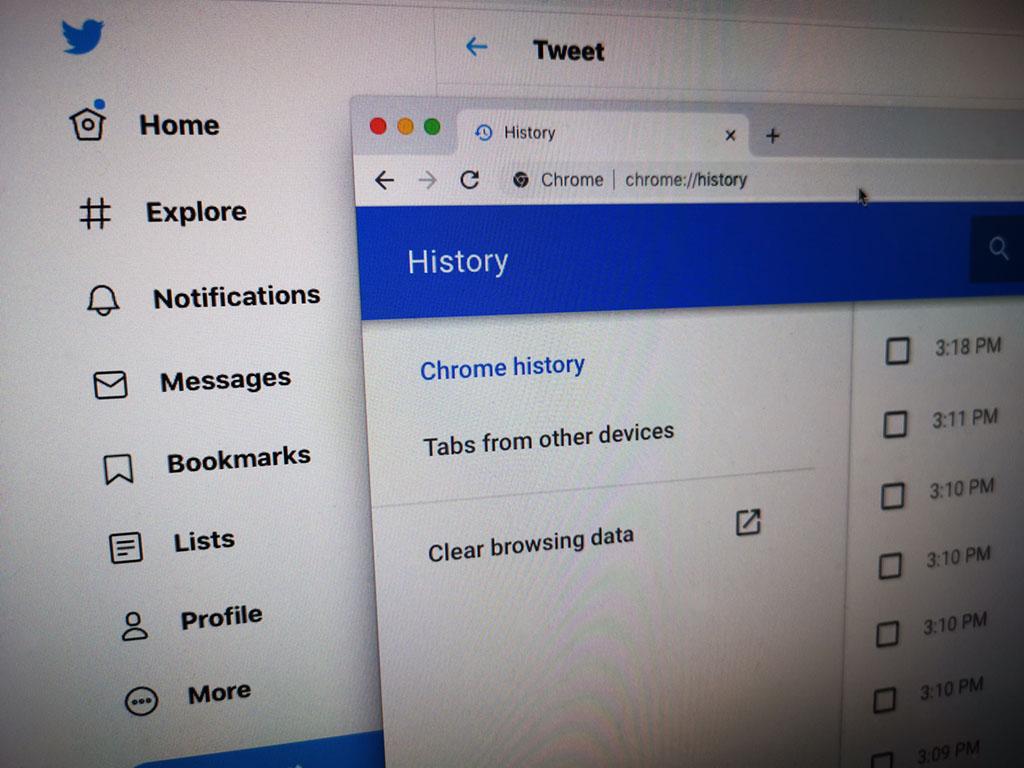

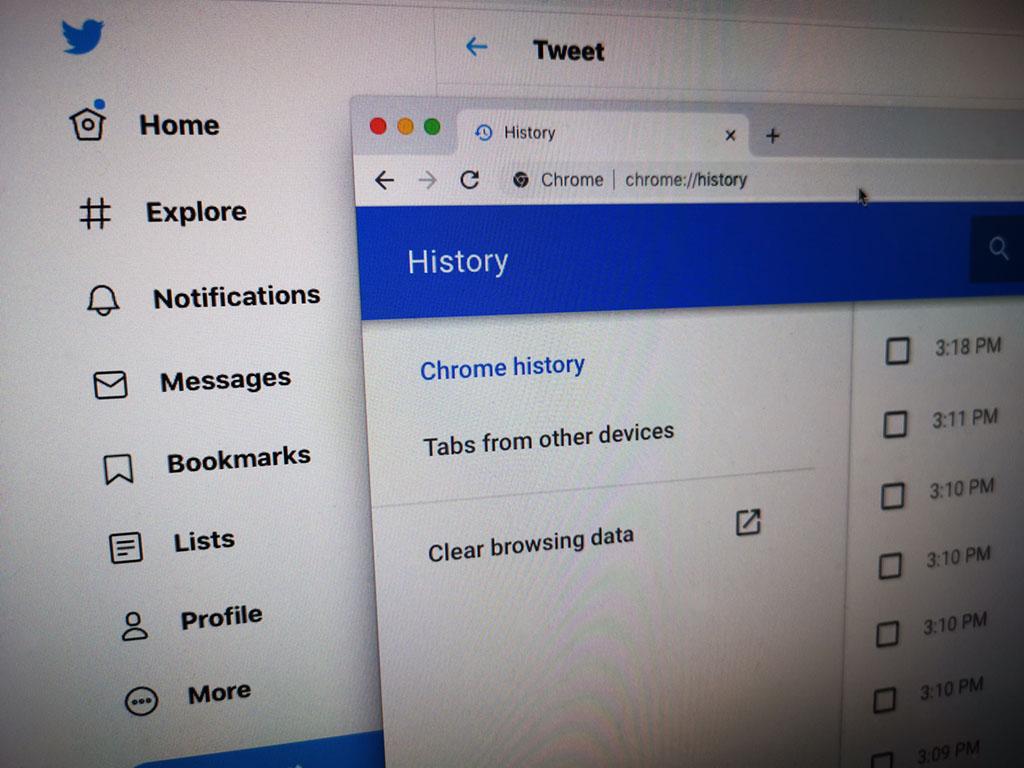

Rather than rely on body shape, behavioral authentication identifies users by observing their activity—the applications they use, the websites they visit, the words they type, and the other people and systems they interact with.

On the other hand, for behavioral authentication to work, your behavior does have to be observed, stored, and analyzed—and that's a lot of personal data to share.

For users, the privacy concerns here are obvious. The fact that what you're doing is being closely observed can be unsettling—and the fact that it’s being stored, doubly so.

This makes behavioral authentication a dicey proposition in most circumstances and a very dicey proposition in high-security contexts—where storing what a privileged user is doing and working on is often an absolute no-no.

Behavioral Biometrics Solves the Problem

Both biometric authentication and behavioral authentication offer key advantages.

Most importantly, they require no memorization, are hard to “lose,” and are often stronger under ideal circumstances than other forms of authentication. Yet both are increasingly hobbled by the larger privacy concerns that they raise—and the risks associated with these concerns.

The solution to this problem, as it turns out, is a technology that leverages the best of both worlds: behavioral biometrics.

Behavioral-biometric solutions like Plurilock™ identify people using only the biometric components of their behavior: micro-patterns in movement that are as unique as fingerprints.

- Like biometric solutions, behavioral-biometric solutions rely on properties of an individual body for authentication, but only on kinetic characteristics, not on observable body features.

- Like behavioral solutions, behavioral-biometric solutions rely on behavior over time for authentication, but only on tiny patterns of motion, not on recognizable actions.
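To make "kinetic characteristics" and "tiny patterns of motion" more concrete, here is a minimal Python sketch. It assumes keystroke timing as the movement signal, which is an illustrative choice and not something this article attributes to Plurilock or any other product. The `KeyEvent` type and the `timing_features` function are hypothetical names invented for the example. The point it tries to show is that the key identity can be discarded, leaving only how the user moved.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class KeyEvent:
    """Hypothetical raw input event; `key` is never used during extraction."""
    key: str
    down_ms: float  # time the key was pressed
    up_ms: float    # time the key was released


def timing_features(events: list[KeyEvent]) -> dict[str, float]:
    """Reduce raw key events to timing statistics only (no key identities)."""
    if len(events) < 2:
        raise ValueError("need at least two key events")
    # Dwell time: how long each key is held down.
    dwell = [e.up_ms - e.down_ms for e in events]
    # Flight time: gap between releasing one key and pressing the next.
    flight = [b.down_ms - a.up_ms for a, b in zip(events, events[1:])]
    return {"mean_dwell_ms": mean(dwell), "mean_flight_ms": mean(flight)}


# Made-up sample data for illustration only.
events = [
    KeyEvent("h", 0.0, 90.0),
    KeyEvent("i", 150.0, 230.0),
    KeyEvent("!", 300.0, 400.0),
]
features = timing_features(events)
print(features)
```

Because the function never reads `key`, typing entirely different text with the same rhythm would yield the same features. A real system would presumably use far richer statistics than two averages; this sketch only illustrates the separation between motion and content.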

By taking the best from both worlds, behavioral-biometric systems can uniquely identify users through traits that are always present and highly individual, without compromising or risking user privacy:

- No body or body shape details

- No data about social ties, habits, activities, or preferences

- No biographical data, and no data that could be used to reconstruct or identify a biography

- Nothing that can be used to impersonate a user on other systems
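As a rough illustration of what such a system might need to store, the following Python sketch compares a new sample against an enrolled profile. Everything here is an assumption made for the example: the feature names, the numbers in `PROFILE`, the z-score distance, and the `threshold` default are hypothetical and are not drawn from any particular product.

```python
from math import sqrt

# Hypothetical enrolled profile: per-feature (mean, standard deviation).
# Note what it holds: timing statistics only, with no text, sites, or images.
PROFILE = {
    "mean_dwell_ms": (90.0, 10.0),
    "mean_flight_ms": (65.0, 15.0),
}


def distance(profile, sample):
    """Root-mean-square z-score of the sample against the profile."""
    z_squared = [
        ((sample[name] - mu) / sigma) ** 2
        for name, (mu, sigma) in profile.items()
    ]
    return sqrt(sum(z_squared) / len(z_squared))


def matches(profile, sample, threshold=2.0):
    """Accept when the RMS z-score is at most `threshold` (arbitrary default)."""
    return distance(profile, sample) <= threshold


# Made-up samples for illustration only.
same_user = {"mean_dwell_ms": 95.0, "mean_flight_ms": 60.0}
other_user = {"mean_dwell_ms": 140.0, "mean_flight_ms": 20.0}

print(matches(PROFILE, same_user))
print(matches(PROFILE, other_user))
```

In this sketch the stored profile is just a handful of numbers describing motion. It says nothing about what was typed, which applications were used, or who the user interacted with, which is the property the list above describes.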

When introducing someone to behavioral biometrics for the first time, it’s common to hear concerns about privacy. We've all been conditioned by biometric systems and behavioral systems to assume the worst about the privacy implications of either term.

Fear not. Behavioral-biometric technologies specifically address this concern by relying on data that's far more privacy-safe, and virtually impossible for even a committed third party to reuse or recognize.