Key Points

- In the machine learning sense, AI has long been in use in cybersecurity

- Its primary use is as a deep pattern recognition technology

- This has led to alert creep, but not workload reductions

- As AI matures, more capable AI systems will lead to SOC load reductions

Current AI systems are very good at recognizing patterns. Active AI agents with multi-system access that can take the place of SOC team members are still ahead of us.

Quick Read

The rapid rise in public awareness of AI over the course of 2023 raised obvious questions about AI's potential usefulness in a variety of areas, cybersecurity among them. "When will we get cybersecurity AI?" has become something of a rote question for those not on the front lines.

Those who are on cybersecurity's front lines, however, generally recognize that AI has long been with us in the form of machine learning. Machine learning techniques are mainstays of threat detection, user and entity behavior analytics (UEBA), malware detection, incident triage and response, identity and access management (IAM), phishing detection, analytics, network security, and vulnerability management, to name a few.

Unfortunately, the implication behind the question currently has a troubling answer. Thus far, the "help" that AI seems to promise—the ability to reduce workloads and create a magical reduction in breach or incident rates by "smartly" taking over to spot and address threats—has not materialized.

The reason for this is simple—current uses of cybersecurity AI are primarily "smart" in the sense that they are very good at recognizing patterns. That is to say, they are able to recognize software activity that "looks like" malware, or user activity that "doesn't look like" a regular user, or other similar recognition tasks. This provides a real and measurable benefit; AI systems are able to detect anomalies that would have slipped past humans.
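As a rough illustration of what "doesn't look like a regular user" can mean in practice, here is a minimal sketch of statistical anomaly detection. It assumes a single numeric feature (hypothetical hourly login counts) and a simple z-score rule. Production systems use far richer features and models, and the function names, sample data, and threshold below are assumptions made for this example only.

```python
from statistics import mean, stdev


def z_scores(baseline, observed):
    """Score each observed value by its distance from the baseline mean,
    measured in baseline standard deviations."""
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        raise ValueError("baseline has no variation; z-scores are undefined")
    return [(x - mu) / sigma for x in observed]


def flag_anomalies(baseline, observed, threshold=3.0):
    """Return the observed values whose z-score magnitude exceeds the threshold."""
    scores = z_scores(baseline, observed)
    return [x for x, z in zip(observed, scores) if abs(z) > threshold]


# Hypothetical data: logins per hour for one user during a normal week,
# followed by four newly observed hours.
baseline = [52, 48, 50, 51, 49, 50, 47, 53]
observed = [50, 49, 120, 51]

print(flag_anomalies(baseline, observed))  # [120]
```

The detector recognizes that 120 logins in an hour does not fit the learned pattern, but it knows nothing about why. Deciding whether that is an attack or a batch job is left to whoever receives the alert.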

However, as AI has been deployed in this way across the industry, the complexity of cyber systems and their roles in most organizations has similarly increased. In a sense, the deployment of AI across the industry has merely enabled companies to "keep pace," at best, with the growth in cyber complexity, attack rates, and incident rates, where they might otherwise have been overwhelmed.

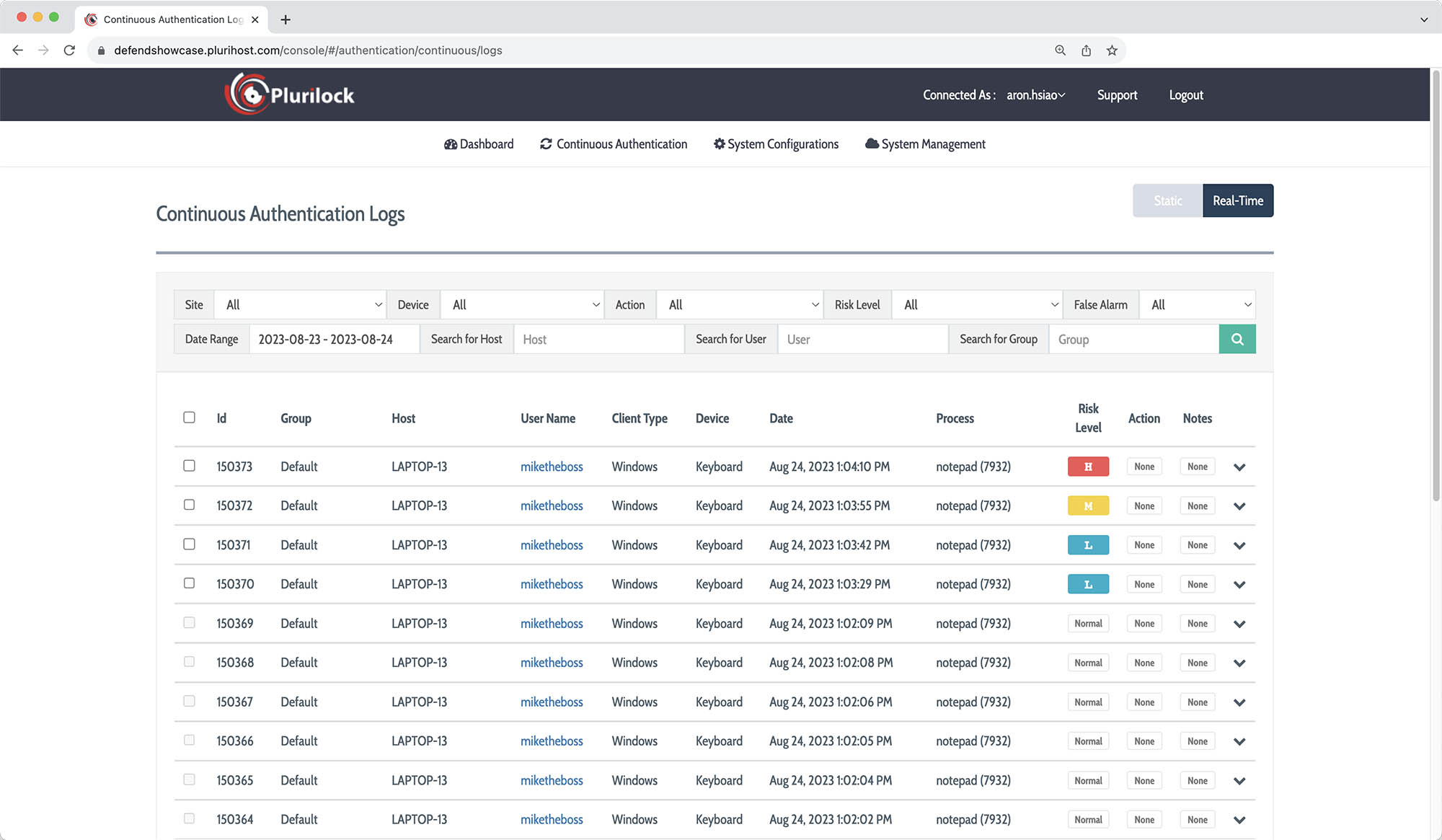

In practice, the result of machine learning and AI in cybersecurity has largely been an increase in the number of alerts that security operations center (SOC) teams must address. While in some cases action can be taken based on AI determinations alone, the real world is messy enough, and the potential costs of incorrect action high enough, that AI cybersecurity systems are often configured to notify of an anomaly rather than to act.
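The notify-rather-than-act configuration described above can be sketched as a simple thresholding policy. This is an illustrative assumption about how such a policy might look, not a description of any particular product. The `Detection` type, the `decide` function, and both threshold values are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Detection:
    source: str        # which detector raised this (hypothetical label)
    confidence: float  # detector's estimated probability of malicious activity


def decide(detection, act_threshold=0.99, alert_threshold=0.5):
    """Map a detection to 'act', 'alert', or 'ignore'.

    Because a wrong automated action is costly, the bar for acting is set
    much higher than the bar for notifying a human.
    """
    if detection.confidence >= act_threshold:
        return "act"
    if detection.confidence >= alert_threshold:
        return "alert"
    return "ignore"


detections = [
    Detection("edr", 0.995),
    Detection("ueba", 0.70),
    Detection("phishing-filter", 0.62),
    Detection("network", 0.20),
]

for d in detections:
    print(d.source, decide(d))
```

With a cautious `act_threshold`, most detections that are not ignored land in the "alert" bucket, which is one way to see why better detection tends to raise SOC alert volume rather than lower it.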

The increase in competence demonstrated by the most recent round of generative AI systems hints at a future in which AI agents may be able to gather alerts from other machine learning cybersecurity systems, synthesize the gathered information, make a judgment, and act. When this happens, we will see AI systems finally reducing, rather than increasing, SOC load. But the hallucination rates of even best-of-breed AI systems at the moment show us why this future isn't here yet.
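To make the "gather, synthesize, judge" idea concrete, here is a deliberately simplified sketch of cross-system alert correlation. It is not an AI agent. It only shows the shape of the synthesis step, under the assumptions that each alert carries an entity name and a confidence score, that the sources are independent, and that a noisy-OR combination is an acceptable stand-in for judgment. All names, data, and the escalation threshold are hypothetical.

```python
from collections import defaultdict
from math import prod


def correlate(alerts):
    """Group alerts by entity and combine their confidences with noisy-OR:
    combined = 1 - product(1 - p_i). Assumes the sources are independent."""
    by_entity = defaultdict(list)
    for entity, source, confidence in alerts:
        by_entity[entity].append(confidence)
    return {
        entity: 1 - prod(1 - p for p in confidences)
        for entity, confidences in by_entity.items()
    }


def escalate(alerts, threshold=0.85):
    """Return the entities whose combined score meets the escalation threshold."""
    combined = correlate(alerts)
    return [entity for entity, score in combined.items() if score >= threshold]


# Hypothetical alerts: (entity, source system, confidence)
alerts = [
    ("alice", "ueba", 0.6),
    ("alice", "edr", 0.7),
    ("bob", "phishing-filter", 0.3),
]

print({e: round(s, 2) for e, s in correlate(alerts).items()})
print(escalate(alerts))
```

Here three raw alerts collapse into one escalation, because two moderately confident sources agree about the same entity. A real agent would also need to weigh context, explain its reasoning, and avoid fabricated conclusions, which is where current hallucination rates become the limiting factor.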

Need AI Cybersecurity solutions?

We can help!

Plurilock offers a full line of industry-leading cybersecurity, technology, and services solutions for business and government.

Talk to us today.

More to Know

AI Cybersecurity Already Exists

Products like Plurilock DEFEND and Plurilock AI Complete exemplify the ways in which AI is applied in cybersecurity today; they generally function as sophisticated pattern recognition systems that can address and analyze volumes or rates of anomaly data that humans could not ingest.

Pattern Recognition, Cybersecurity Help and Hindrance

Today's cybersecurity AI systems are able to spot anomalies that humans would miss, but the net effect in many cases is an increase in alert load and alert fatigue. Hopes are high for a future in which agentic AI systems can triage alerts across systems more effectively and take the correct action.